Prof. Virginia Tsapaki, medical physicist and International Atomic Energy Agency (IAEA) expert, hosted a session on the ethics guidelines for trustworthy AI and what the EU AI Act will bring in the future. Speaker Hugh Harvey (UK), a radiologist and Managing Director at Hardian Health, offered welcome insights on what’s new in AI regulation.

Three use cases for AI in Radiology

Deep-learning-supported image enhancement was the first AI technology to have an impact on imaging. Recognized as a medical device, it falls under existing regulation, so the dos and don'ts are clearly delimited. The use of large language models (LLMs) is more recent, and many questions are still open. Using an LLM and generative AI to automatically draft radiology reports from findings is definitely AI, yet it might not be recognized as a medical device, as it only generates impressions from findings entered by a specialist. Finally, recent forays into applying LLMs to the direct interpretation of images, with fully automated generation of findings and radiology reports, open the door to new regulatory challenges.

General purpose generative AI is not regulated

As of today, the medical use of general-purpose AI is unregulated, but the EU AI Act will change that. This extensive legislation has far-reaching implications for radiologists, academics, and healthcare, and focuses on the regulatory framework, the classification of risk categories, and compliance. The text is now final and awaiting adoption. Once adopted, a transitional period for compliance will allow medical device manufacturers to react and adapt to this impactful regulation.

Under the AI Act, medical devices or in vitro diagnostic medical devices that are AI-powered or incorporate AI as a safety component come under both the MDR/IVDR and the AI Act.

The AI Act stipulates additional considerations for manufacturers of AI as a Medical Device (AIaMD) in order to further minimise the 'risks and harms' of AI.

Four new risk categories for AI devices

Minimal: AI systems with minimal risk may be developed and used without additional legal obligations. However, codes of conduct may encourage providers to apply the mandatory requirements voluntarily.

High: Medical AI devices, such as those involved in clinical decision support or diagnosis, fall under the high-risk category. This category includes stringent requirements due to the critical nature of healthcare applications.

Limited: Limited-risk AI includes systems that interact with humans through chatbots, emotion recognition, or those that generate or manipulate image, audio, or video content. Transparency obligations apply in this category.

Unacceptable: The EU AI Act introduces an unacceptable risk category, which is aimed at safeguarding against potential use cases with severe societal consequences. AI systems that rank people based on scoring systems for decisions like job or housing eligibility are prohibited in this category.
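The four categories can be read as a simple lookup from risk tier to regulatory consequence. The sketch below is illustrative only: the names `RiskTier`, `CONSEQUENCES`, `EXAMPLE_TIERS` and `describe` are hypothetical, and the example use cases are only those mentioned in the text above, not an official classification.

```python
from enum import Enum


class RiskTier(Enum):
    """Risk tiers as summarised in this report (hypothetical naming)."""
    MINIMAL = "minimal"
    LIMITED = "limited"
    HIGH = "high"
    UNACCEPTABLE = "unacceptable"


# Consequence of each tier, paraphrased from the category descriptions above.
CONSEQUENCES = {
    RiskTier.MINIMAL: "no additional legal obligations",
    RiskTier.LIMITED: "transparency obligations",
    RiskTier.HIGH: "stringent requirements",
    RiskTier.UNACCEPTABLE: "prohibited",
}

# Example use cases taken from the text; an assumption for illustration,
# not a legal determination for any real product.
EXAMPLE_TIERS = {
    "clinical decision support": RiskTier.HIGH,
    "diagnosis": RiskTier.HIGH,
    "chatbot": RiskTier.LIMITED,
    "emotion recognition": RiskTier.LIMITED,
    "scoring people for job or housing eligibility": RiskTier.UNACCEPTABLE,
}


def describe(use_case: str) -> str:
    """Return a one-line summary of the tier and consequence for a use case."""
    tier = EXAMPLE_TIERS[use_case]
    return f"{use_case}: {tier.value} risk -> {CONSEQUENCES[tier]}"


if __name__ == "__main__":
    for case in EXAMPLE_TIERS:
        print(describe(case))
```

In practice the tier of a real device depends on its intended purpose and on legal assessment, which a lookup table like this cannot replace.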

Regulatory timelines allow for discussions, negotiations and staggered implementation

April 2021: Proposal

The European Commission proposed the EU AI Act, setting the stage for comprehensive AI regulation in the EU.

2021-2023: Negotiations

Negotiations between the European Parliament and the European Council began, and they approved the draft of the AI Act in 2023.

Late 2023: Trilogues

Trilogues involve discussions between the European Commission, European Parliament, and the European Council to refine and finalize the legislation.

2024: Implementation

The draft is expected to be signed into law in May or June, pending translation and dissemination.

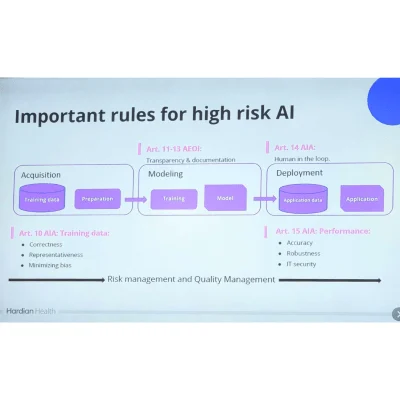

Regulation impact on AIaMD manufacturers: more documentation

Systems classified as High Risk will have specific obligations in relation to:

- reporting requirements to consumers

- transparency to users

- data protection and governance

- technical documentation

- record keeping

- risk management

- human oversight

- robustness, accuracy and security

The AI Act underlines four regulatory mechanisms:

- Conformity assessment: manufacturers will have to undergo CE marking. Any standards relevant to the AI Act (e.g. ISO/IEC FDIS 42001) will be audited at the same time as MDR documentation.

- Transparency: providers of AI systems must inform users about the system's nature, purpose, and the existence of human oversight.

- Change control: the AI Act allows certain predefined changes to machine learning algorithms without requiring a new conformity assessment.

- Market surveillance and enforcement: the regulation outlines the need for such measures, empowering designated regulators in EU Member States and the European Artificial Intelligence Board at the EU level.

In the case of non-compliance, the Act allows for device recall, and penalties can apply.

A breach of the prohibition on unacceptable-risk AI systems, or of the data governance provisions for high-risk AI systems, can yield a penalty of up to the higher of EUR 35 million or 7% of total worldwide annual turnover. Non-compliance with any other requirement under the Act can result in a penalty of up to the higher of EUR 15 million or 3% of total worldwide annual turnover. Finally, supplying incorrect, incomplete, or misleading information to notified bodies and national authorities can lead to a penalty of up to the higher of EUR 7.5 million or 1% of total worldwide annual turnover.
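The "higher of" rule means the ceiling scales with company size: the fixed amount applies to smaller companies, the turnover percentage to larger ones. A minimal sketch of that arithmetic, using the figures quoted above; the tier keys and the function name `max_penalty` are hypothetical labels chosen for illustration, and the result is only the upper bound, not the fine actually imposed.

```python
# (fixed cap in EUR, percentage of total worldwide annual turnover),
# as quoted in the paragraph above. Keys are hypothetical labels.
PENALTY_TIERS = {
    "prohibited_or_data_governance": (35_000_000, 7),
    "other_requirement": (15_000_000, 3),
    "misleading_information": (7_500_000, 1),
}


def max_penalty(breach: str, annual_turnover_eur: float) -> float:
    """Return the penalty ceiling: the higher of the fixed cap
    and the turnover-based cap for the given breach type."""
    fixed_cap, percent = PENALTY_TIERS[breach]
    turnover_cap = annual_turnover_eur * percent / 100
    return max(fixed_cap, turnover_cap)


if __name__ == "__main__":
    # A company with EUR 1 billion turnover: 7% exceeds the fixed cap.
    print(max_penalty("prohibited_or_data_governance", 1_000_000_000))
    # A company with EUR 100 million turnover: the fixed cap applies.
    print(max_penalty("prohibited_or_data_governance", 100_000_000))
```

For example, at EUR 1 billion turnover the 7% cap (EUR 70 million) exceeds the EUR 35 million floor, while at EUR 100 million turnover the fixed EUR 35 million is the higher figure.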

The case for medical LLM regulation

Whether an LLM is developed specifically for medical use or is a general-purpose generative AI applied to a clinical need, it comes under the MDR and the AI Act. Yet LLMs pose specific regulatory challenges:

- Open-source models cannot be used, for lack of quality documentation

- Infinite inputs/outputs make quality assurance impossible

- Unknown cybersecurity risks

- Most LLMs are trained on copyrighted material and are not GDPR compliant

- Larger models (more than 10^25 FLOPs), like ChatGPT, pose 'systemic risks' as outlined in the Act

- Such tools are non-deterministic, thus challenging for clinical studies

- Notified bodies have no experience yet auditing this technology

The EU AI Act takes steps to allow for regulation in the patients’ interest without crushing innovation under overly strict rules.

Microenterprises may fulfil certain elements of the quality management system required by Art 17 in a simplified manner (Art 55a). The EU AI Office shall provide standardised templates for the areas covered by the Regulation, and organise specific awareness-raising and training activities on the application of the Regulation tailored to the needs of SMEs (Art 55). Articles 53 and 55 offer startups and SMEs priority access to a controlled environment that fosters innovation and facilitates the development, training, testing and validation of innovative AI systems. Finally, the specific interests and needs of SME providers, including start-ups, shall be taken into account when setting the fees for conformity assessment, proportionate to their size, market size and other relevant indicators (Art 55).