ICU Management & Practice, Volume 16 - Issue 1, 2016

The significance of research and of researchers is frequently discussed and debated, and medical research is no exception. Why do we publish? This straightforward question is often difficult to answer in a simple way. To start with, we can list some reasons:

- We wish to make a difference for the outcome of our patients;

- We have an obligation to do research because of unanswered questions;

- We have to do it as part of a training programme or institutional requirement;

- We want to be “visible” in the scientific community;

- We want to get funding for future research;

- Curiosity.

Different methods are available to measure the impact of research. They range from a subjective, personalised judgement of a total scientific contribution, as is often made when assessing a research grant application or an application for an academic position, to the use of various “objective” numeric variables linked to publications.

The simplest method to use is of course merely to add up the number of publications, but we all know that there is more to research than just counting numbers.

In this field there are several stakeholders, the two most important being the journals and the authors (scientists), and instruments that measure one group are not automatically suitable for the other.

Bibliometry

Bibliometry is the scientific field devoted to the development and use of various analytic methods to study literature and authorship by using purely statistical criteria. As such there is no formal evaluation of the content of the publications, with the exception of the categorisation of research field and type of publication. Bibliometry has developed considerably over the last decades, and is used extensively both for scientific journals and for researchers/authors.

Journal Impact Factor

The best-known bibliometric ratio is a measure of the importance of a journal, commonly known as the Journal Impact Factor (JIF) or just IF (Garfield 2006). However, it is important to emphasise that this is purely a description of the journal itself, and the IF is only indirectly linked to the contribution of the individual authors. The JIF is expressed as a number that is calculated for each year: the number of citations received in the current year by articles that the journal published in the previous two years (numerator), divided by the number of articles the journal published in those two years (denominator).

Example

If the articles a specific journal published in 2012 and 2013 were cited 1200 times in 2014, and the journal published 400 papers in 2012 and 2013, the JIF for 2014 is 1200/400 = 3.0.

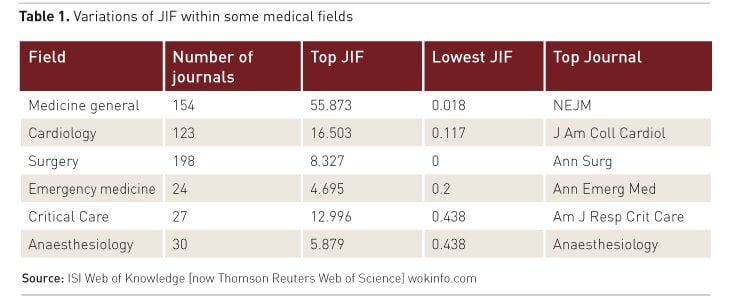
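The arithmetic of the example above can be sketched in a few lines of Python. The function and parameter names are illustrative assumptions, not part of any official tool; the figures are those of the example.

```python
def journal_impact_factor(citations_in_year: int, items_prev_two_years: int) -> float:
    """Citations received in year Y by items published in Y-1 and Y-2,
    divided by the number of items published in Y-1 and Y-2."""
    if items_prev_two_years <= 0:
        raise ValueError("the journal must have published at least one item")
    return citations_in_year / items_prev_two_years


# Figures from the example: 1200 citations in 2014, 400 papers in 2012-2013.
jif_2014 = journal_impact_factor(1200, 400)
print(f"JIF 2014 = {jif_2014:.1f}")  # prints "JIF 2014 = 3.0"

# Same citations, fewer counted items: the JIF rises.
print(journal_impact_factor(1200, 300))  # prints 4.0
```

The second call illustrates why the denominator matters: with the numerator unchanged, reducing the number of counted items raises the JIF.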

Hence it is easy to see that the JIF is influenced by the number of citations and the number of published items. To increase the JIF there has to be an increase in citations, or a decrease (absolute or relative) in the number of articles. This also means that two journals with the same absolute number of citations (numerator) can vary a lot with regard to their JIF, depending on the denominator. For example, Intensive Care Medicine had for 2014 an IF of 7.214 with 16128 citations, while Critical Care Medicine had an IF of 6.312 but with 33132 citations! Increasingly, the IF has been used to rank journals, and those with a high IF are usually perceived to be the most important ones. However, the IF varies a lot from field to field, in particular within medical science. Table 1 shows the JIF within some important medical fields and its huge variations. Only very few medical journals have an IF > 20, and they are mostly general in nature (not field-specific).

The JIF can be altered in various ways. One is to increase self-citations. An example is the “Year-in-review” type of article that some journals publish the following year. By citing a number of the journal's own publications from the year before, such an article will have an impact (small or large) on the numerator that year. The other is to have as few “citable” items as possible. An analysis has shown that less than 25% of all published items in some journals were citable items (McVeigh and Mann 2009).

It is important to underscore that the JIF was not developed to rank individual authors (scientists). The JIF is simply the mean number of citations from a two-year period, so a JIF of 3.0 means each article (from the preceding two years) was cited on average 3 times the following year. But this average is not necessarily meaningful for the individual authors of a specific article; an article may be cited more than 50 times or never, and the individual impact of these two extremes is of course very different.

Other Bibliometric Methods

Hence, within bibliometry other methods have been developed to better describe the contribution of a single article, and thereby to better reflect the individual author(s). These methods should increasingly be used in this context, rather than applying the JIF in an inappropriate setting.

One of the most interesting is of course the total number of citations of an individual author, that is, the sum of how many times his or her publications are cited. Obviously this better reflects individual performance, although this method also has its weaknesses. It could happen, for example, that in a portfolio of 100 articles and 1500 total citations, one article was cited 500 times, and the other 99 articles together 1000 times. This means that the number of citations can be heavily influenced by a few very “popular” articles, while the rest are less cited (or less “popular”). To overcome this, the so-called h-index was developed in 2005 (Hirsch 2005), and has become increasingly popular. The h-index is not immediately intuitive, as the definition goes like this:

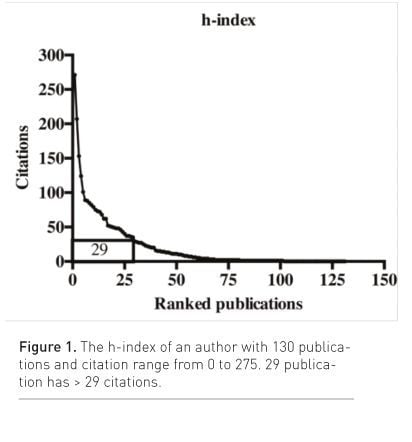

A scientist has an index of h if h of his or her Np papers have at least h citations each, and the rest (Np-h) have ≤ h citations each.

Put into numbers, if a scientist has an h-index of 29, this means that 29 of his or her papers are each cited at least 29 times, and the rest ≤ 29 times (fig. 1). It is then understood that the higher the h-index is, the more “impact” that author has.
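The definition can be made concrete with a minimal Python sketch. The function name and the citation counts are hypothetical and chosen only for illustration: the papers are ranked by citations in descending order, and h is the last rank at which the citation count is still at least as large as the rank.

```python
def h_index(citations):
    """Return the largest h such that h papers have at least h citations each."""
    ranked = sorted(citations, reverse=True)
    h = 0
    for rank, count in enumerate(ranked, start=1):
        if count >= rank:
            h = rank  # the paper at this rank still has enough citations
        else:
            break     # all remaining papers have fewer citations than their rank
    return h


# Hypothetical portfolio of seven papers (citations per paper).
portfolio = [25, 8, 5, 3, 3, 1, 0]
print(sum(portfolio), h_index(portfolio))        # prints "45 3"

# Same portfolio, but the top paper is now cited 500 times.
with_outlier = [500, 8, 5, 3, 3, 1, 0]
print(sum(with_outlier), h_index(with_outlier))  # prints "520 3"
```

In this made-up portfolio the single highly cited paper multiplies the total citation count but leaves the h-index unchanged.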

One advantage of the h-index is that it is more representative of the whole “production line”. Outliers such as a couple of highly cited publications, or some rarely cited ones, will not substantially affect the number. In the field of anaesthesiology, it has been shown that the h-index is a good indicator of academic activity (Pagel and Hudetz 2011).

Still, both the total citation count and the h-index have shortcomings with regard to individual scientists. Different databases may come up with a different h-index for the same author, and it is often found to be higher using Google Scholar than other bibliometric databases. To compare individual authors the same database must be used.

These measures do not take into account the number of authors of a single publication, nor the order of the individual authors; all are given equal credit. Today it is not unusual to find 10-20 authors or more on a single paper, and the individual contribution to the work may sometimes be difficult to see. The first and last positions are also usually considered more important: the first author is regarded as the main researcher, and the last is usually the more senior author or the supervisor of the project. Bibliometrics that illustrate such differences are not frequently used, and often have to be retrieved manually.
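Where author lists are available in machine-readable form, such a manual tally can be sketched in Python. This is only an illustration under stated assumptions: the function name, the placeholder author names and the rule that a sole author counts as first author are all hypothetical choices, not an established metric.

```python
from collections import Counter


def author_positions(author_lists, name):
    """Count how often `name` appears as first, last or middle author."""
    tally = Counter()
    for authors in author_lists:
        if name not in authors:
            continue
        if authors[0] == name:
            tally["first"] += 1   # assumption: a sole author counts as first
        elif authors[-1] == name:
            tally["last"] += 1
        else:
            tally["middle"] += 1
    return tally


# Hypothetical papers with placeholder author names.
papers = [
    ["Author A", "Author B", "Author C"],
    ["Author B", "Author A"],
    ["Author C", "Author A", "Author B"],
    ["Author A"],
]
print(dict(author_positions(papers, "Author A")))
# prints {'first': 2, 'last': 1, 'middle': 1}
```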

Another interesting aspect is the development over time. Is the publication output increasing, decreasing or at a steady state? In order to analyse this dimension, the number of publications or citations per year must be tabulated, and some bibliometric databases do this automatically.
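A per-year tabulation of this kind is straightforward to sketch in Python. The function name and the publication years below are hypothetical; years without any publication are filled in with zero so that gaps in the record remain visible.

```python
from collections import Counter


def publications_per_year(years):
    """Tabulate publications per year, including years with none."""
    if not years:
        return {}
    counts = Counter(years)
    return {year: counts[year] for year in range(min(years), max(years) + 1)}


# Hypothetical publication years for one author.
years = [2010, 2010, 2011, 2013, 2013, 2013]
print(publications_per_year(years))
# prints {2010: 2, 2011: 1, 2012: 0, 2013: 3}
```

The same tabulation applies to citations per year if the input is the list of years in which each citation was made.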

The Future

What will the future bring? The rapid development of web-based resources will probably alter the way we look at individual authors and scientists, based on the assumption that the significance of a publication is not only counted in citations, but also in whether it is downloaded and read, and ultimately of course in whether its scientific and clinical findings improve patient care. This brings bibliometrics to another dimension; we could maybe call this “publiometrics”.

On the web we now find portals where research and publications are displayed and discussed. ResearchGate (researchgate.com) is just one of many portals where individual scientists may gather information, and the system then calculates different metrics to describe individual performance. The system also automatically screens for new publications that can be added to the portfolio. ResearchGate tracks the popularity of your publications as “reads” (how many people read or download your publication) in addition to the total citations. It has also introduced its own impact points based on a number of inputs, including downloads and web discussions. The number of downloads of an individual paper may also be found on the homepage of individual open access journals like Critical Care.

Increasingly, social media such as Twitter are used to distribute research and publications (engineering.twitter.com). The number of tweets may in the future also be used as a “publiometric” (Chatterjee and Biswas 2011). Several medical journals now send out tweets simultaneously with publication in order to increase awareness of the event.

Conclusion

To end this short presentation of bibliometry, it is important to realise that the IF is a measure of the impact of a journal, and is not suited to describing individual researchers. The latter group should be evaluated using several techniques, including the h-index, the total number of citations, the citation profile over time and, if applicable, the position in the author list. The importance of a paper is also increasingly reflected in downloads, comments and likes on web-based media.

References:

Chatterjee P, Biswas T (2011) Blogs and Twitter in medical publications – too unreliable to quote, or a change waiting to happen? S Afr Med J, 101(10): 712, 714.

Garfield E (2006) The history and meaning of the journal impact factor. JAMA, 295(1): 90-3.

Hirsch JE (2005) An index to quantify an individual’s scientific research output. Proc Natl Acad Sci, 102(46): 16569-72.

McVeigh ME, Mann SJ (2009) The journal impact factor denominator: defining citable (counted) items. JAMA, 302(10): 1107-9.

Pagel PS, Hudetz JA (2011) H-index is a sensitive indicator of academic activity in highly productive anaesthesiologists: results of a bibliometric analysis. Acta Anaesthesiol Scand, 55(9): 1085-9.