ICU Management & Practice, Volume 16 - Issue 4, 2016

The last two decades have seen an accelerated interest in quality management in healthcare in general, and also in intensive care specifically. Often safety has been the main issue, but increasingly a more general approach to quality has emerged, in particular with a focus on quality indicators (QI).

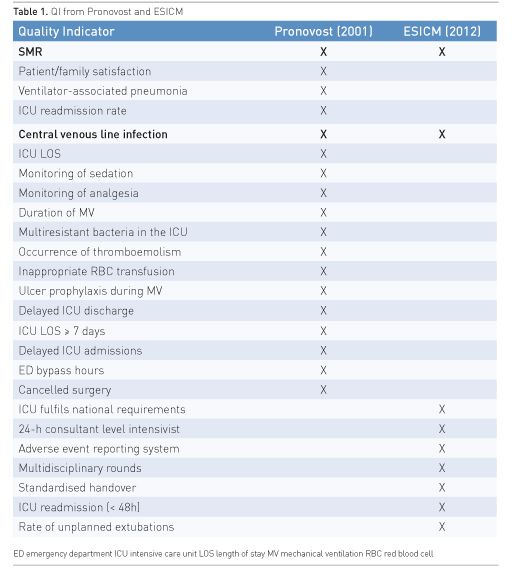

It is now more than 15 years since Pronovost and co-workers approached this area systematically and published a list (Table 1) of what they considered to be core QI for intensive care (Pronovost et al. 2001). This fundamental work was later followed up in the Netherlands, resulting in the publication of QI developed for Dutch intensive care units (ICUs) in 2007 (de Vos et al. 2007). Spain and Sweden were early to publish their lists of QI for intensive care on the web, both in 2006.

In 2012 the European Society of Intensive Care Medicine (ESICM) published the development of what it considered to be 9 core QI for intensive care (Rhodes et al. 2012), also shown in Table 1. Views on which QI are important have apparently changed over time: only two of the 27 QI in total appear on both lists, the standardised mortality ratio (SMR) and blood-borne infections (BBI). This is an interesting observation, and it shows that what was considered important at first is not necessarily perceived the same way at a later stage.

Indicator or Standard?

These terms are often used interchangeably, but do they reflect the same content? In his quality assurance "bible", Avedis Donabedian does not in fact discuss the term indicator, and in particular not the quality indicator (Donabedian 2003). He defined criteria and standards, the latter as "a specified quantitative measure of magnitude or frequency that specifies what is good or less so". In most respects this is what we today perceive as a QI. The UK's National Institute for Health and Care Excellence (NICE), on the other hand, uses somewhat different definitions: a quality standard is a statement to help improve quality, and an indicator is a measure of outcomes that reflect the quality of care, or of processes linked by evidence to improved outcomes (NICE n.d.).

Today, most countries actively using QI prefer Donabedian’s method to assess clinical performance, and hence use three different classes of QI:

1. Structure

2. Process

3. Outcome

The structure QI is very similar to what other organisations may refer to as standards: either you fulfil it or you do not. For example, an ICU must have a system for reporting adverse events: yes or no.

The process QI covers treatment, diagnosis, prevention and so on, and is often expressed as a percentage. For example, the time to start of antibiotic therapy should be < 1 hour in more than 90% of cases with suspected sepsis.
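As an illustration of how such a percentage-based process QI might be evaluated, the sketch below computes the share of suspected-sepsis cases in which antibiotics were started in under 60 minutes and checks it against the "more than 90%" target. The record layout (`SepsisCase`, `minutes_to_antibiotics`) and the function names are hypothetical and chosen only for this example; real registries will define the start time and case criteria in their own way.

```python
from dataclasses import dataclass


@dataclass
class SepsisCase:
    """One suspected-sepsis case (hypothetical record layout for this sketch)."""
    case_id: str
    minutes_to_antibiotics: float  # time from suspicion of sepsis to first antibiotic dose


def process_qi_compliance(cases, max_minutes=60.0):
    """Percentage of cases in which antibiotics were started in under max_minutes."""
    if not cases:
        raise ValueError("no cases to evaluate")
    on_time = sum(1 for c in cases if c.minutes_to_antibiotics < max_minutes)
    return 100.0 * on_time / len(cases)


def process_qi_met(cases, max_minutes=60.0, target_percent=90.0):
    """True if compliance is strictly above the target ("more than 90% of cases")."""
    return process_qi_compliance(cases, max_minutes) > target_percent


if __name__ == "__main__":
    cases = [
        SepsisCase("a", 35),
        SepsisCase("b", 50),
        SepsisCase("c", 75),  # started too late
        SepsisCase("d", 20),
    ]
    print(process_qi_compliance(cases))  # 75.0
    print(process_qi_met(cases))         # False
```

The strict comparisons mirror the wording of the example indicator (< 1 hour, more than 90%); a unit that defines its threshold as "at most" would use `<=` instead.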

The outcome QI describes actual results, such as changes in health status. For example, the SMR should be less than 0.8.
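The SMR is the ratio of observed to expected deaths, where the expected number is usually the sum of each patient's predicted risk of death from a severity-of-illness model. The article does not say which model or outcome definition is used, so the sketch below simply assumes that a predicted probability of death is available for every patient (for example from a score such as SAPS II or APACHE). The function names are hypothetical.

```python
def standardised_mortality_ratio(died, predicted_risk):
    """SMR = observed deaths / expected deaths.

    died: one boolean per patient (True if the patient died; the choice of
          outcome, e.g. ICU or hospital mortality, is an assumption here).
    predicted_risk: predicted probability of death per patient from a
          severity-of-illness model (assumed to be available).
    """
    if len(died) != len(predicted_risk):
        raise ValueError("one predicted risk is needed per patient")
    observed = sum(1 for d in died if d)
    expected = sum(predicted_risk)
    if expected <= 0:
        raise ValueError("expected deaths must be positive")
    return observed / expected


def outcome_qi_met(smr, threshold=0.8):
    """True if the SMR is below the target used in the example indicator."""
    return smr < threshold


if __name__ == "__main__":
    died = [True, False, False, False, False]
    risk = [0.5, 0.25, 0.25, 0.5, 0.5]  # expected deaths = 2.0
    smr = standardised_mortality_ratio(died, risk)
    print(smr)                  # 0.5
    print(outcome_qi_met(smr))  # True
```

An SMR below 1 means fewer deaths than the model predicted; how meaningful a given value is depends on how well the model is calibrated to the population being measured.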

Status in Europe

Use of QI at a national level (NQI) has increased, and today at least 9 European countries have published their lists of ICU QI, either on the web or in a formal publication.

In 2012 the author surveyed the use of NQI in Europe (Flaatten 2012). The present update was performed to identify further developments in the field, with the main focus on Europe and particular attention to documented results from the use of NQI. Since that publication, two more countries, the UK and Ireland, have established intensive care medicine as a primary specialty, and it was of particular interest to see whether this major initiative had resulted in new or revised quality indicators.

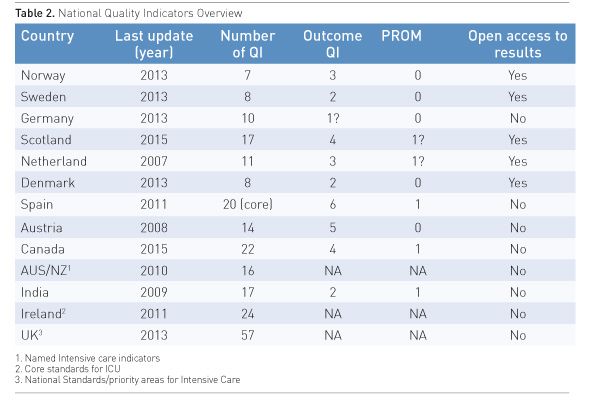

The updated list is shown in Table 2, together with the year of the last revision. Ten countries (7 from Europe) had published their lists of QI, either as a publication or on the web. Three more countries provided data by personal communication. The table also gives the number of outcome QI, whether the QI include patient-reported outcome measures (PROM), and whether QI data are published openly.

Some national health services now require their healthcare providers to report PROM in various areas of healthcare. This is now mandatory in Norway, and it was introduced in the English National Health Service in 2009, with results published yearly (NHS Digital 2015). Open access to results, down to the level of individual hospitals, has been demanded in many northern European countries. The argument has been that, since healthcare is publicly funded through taxes, the public has a right to know the results.

At present, NICE in the UK has no specific standards or indicators published for intensive care, but the Faculty of Intensive Care Medicine (UK), together with a number of other relevant UK societies, has published core standards for ICUs with which all their ICUs are required to comply (Faculty of Intensive Care Medicine 2015). Many of these standards correspond to what are elsewhere called process or structure quality indicators, so in practice the difference is small. Ireland has a similar system in its National Standards for Adult Critical Care, a less comprehensive list but similar in structure to the UK one (Joint Faculty of Intensive Care Medicine of Ireland 2015). At present neither country has introduced formal QI as other European countries have.

These standards are organised differently from ordinary QI and are included in Table 2 for completeness.

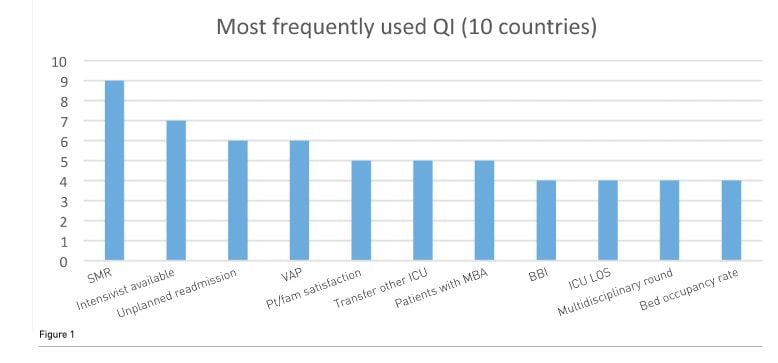

There appears to be great diversity in which QI individual countries have chosen. None is common to all: the most widely used are the SMR (9/10) and the availability of an intensivist in the ICU (7/10). Most QI are used by only 1-2 countries. Figure 1 shows the QI used by more than 4/10 countries. The results may indicate that more countries have started to use indicators or standards to measure the quality of their healthcare, including intensive care. However, many countries in Europe still do not have such a system in place. The reasons for this are probably multifactorial. Some may in fact have a system that was not detected or documented by this search (if so, please contact the author). There may also be a language barrier: the Norwegian Intensive Care Registry publishes on the web only in Norwegian, which of course limits access for non-Norwegian speakers. Another reason may be a high share of private healthcare in a country, which would make the introduction of such benchmarking more demanding. A final reason could be cultural, with laws and regulations making such data difficult to retrieve and probably also to publish.

Conclusion/Future

The use of QI at a national level is a suitable method for focusing on quality in healthcare. Regardless of public access to the results, a local or national ICU network has much to gain from engaging in the process of first identifying and defining QI and then retrieving the data. The data can also be used more stringently for benchmarking, but even without such formal ranking, comparable units will quickly see whether they deviate from the mainstream. Units with particularly good results can be approached so that others can learn from their experience. Used in this way, national QI can put the quality circle of Plan, Do, Evaluate, Change into action, and hopefully contribute to improved healthcare.
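One common way for a network to spot units that deviate from the mainstream on SMR is a funnel-plot style comparison, sketched below. This is a swapped-in technique, not a method described in the article: it assumes that observed deaths in a unit follow a Poisson distribution with mean equal to the expected deaths when the true SMR is 1, and uses the normal approximation SMR ~ N(1, 1/E). The `UnitResult` type, the unit names and the figures in the usage example are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class UnitResult:
    name: str        # hypothetical ICU identifier
    observed: int    # observed deaths in the period
    expected: float  # expected deaths from a severity model (assumed available)


def funnel_limits(expected, z=1.96):
    """Approximate control limits for the SMR of a unit with `expected` deaths.

    Assumes observed ~ Poisson(expected) under SMR = 1, so that
    Var(observed / expected) = 1 / expected (normal approximation).
    """
    if expected <= 0:
        raise ValueError("expected deaths must be positive")
    half_width = z / math.sqrt(expected)
    return max(0.0, 1.0 - half_width), 1.0 + half_width


def flag_deviating_units(units, z=1.96):
    """Return (name, smr, 'high' or 'low') for units outside the limits."""
    flagged = []
    for u in units:
        smr = u.observed / u.expected
        low, high = funnel_limits(u.expected, z)
        if smr > high:
            flagged.append((u.name, smr, "high"))  # more deaths than expected
        elif smr < low:
            flagged.append((u.name, smr, "low"))   # fewer deaths than expected
    return flagged


if __name__ == "__main__":
    units = [
        UnitResult("A", 50, 50.0),
        UnitResult("B", 70, 50.0),
        UnitResult("C", 30, 50.0),
        UnitResult("D", 6, 5.0),  # small unit: wide limits, not flagged
    ]
    print(flag_deviating_units(units))  # [('B', 1.4, 'high'), ('C', 0.6, 'low')]
```

The limits widen as the expected number of deaths falls, so small units are not flagged on chance variation alone. A unit flagged "low" is a candidate for the kind of learning visit described above, although case mix and model calibration should be checked before drawing conclusions.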

Abbreviations

BBI blood-borne infections

ICU intensive care unit

LOS length of stay

QI quality indicators

SMR standardised mortality ratio

VAP ventilator-associated pneumonia

References:

de Vos M, Graafmans W, Keesman E et al. (2007) Quality measurement at intensive care units: which indicators should we use? J Crit Care, 22(4): 267-74.


Donabedian A (2003) An introduction to quality assurance in health care. New York: Oxford University Press.

Faculty of Intensive Care Medicine, Intensive Care Society (2015) Guidelines for the provision of intensive care services. London: Faculty of Intensive Care Medicine & Intensive Care Society. [Accessed: 29 September 2016] Available from ics.ac.uk/ics-homepage/latest-news/guidelines-forthe-provision-of-intensive-care-services

Flaatten H (2012) The present use of quality indicators in the intensive care unit. Acta Anaesthesiol Scand, 56(9): 1078-83.


Joint Faculty of Intensive Care Medicine of Ireland (2011) National standards for adult critical care services. [Accessed: 29 September 2016] Available from jficmi.ie/wp-content/uploads/2015/05/Appendix-9-JFICMI-ICSI_Minimum_Standards.pdf

National Institute for Health and Care Excellence (n.d.) Standards and indicators. [Accessed: 29 September 2016] Available from nice.org.uk/standards-and-indicators

NHS Digital (2015) Finalised patient reported outcome measures (PROMs) in England - April 2014 to March 2015. [Accessed: 29 September 2016] Available from gov.uk/government/statistics/finalised-patient-reported-outcome-measures-proms-in-england-april-2014-to-march-2015

Pronovost PJ, Miller MR, Dorman T et al. (2001) Developing and implementing measures of quality of care in the intensive care unit. Curr Opin Crit Care, 7(4): 297-303.


Rhodes A, Moreno RP, Azoulay E et al. (2012) Prospectively defined indicators to improve the safety and quality of care for critically ill patients: a report from the Task Force on Safety and Quality of the European Society of Intensive Care Medicine (ESICM). Intensive Care Med, 38(4): 598-605.
