Authors

Prof. David A. Koff

Associate Professor and Chair, Department of Radiology,

McMaster University, Radiologist-in-Chief, Diagnostic Imaging,

Hamilton Health Sciences

HealthManagement Editorial Board member

Dr. Nadine Koff

President and co-founder

Real Time Medical

Toronto, Ontario, Canada

“Peer review must be an educational and non-punitive experience leading to overall improved delivery of care”

Key Points:

- A number of high-profile reviews of radiologists in Canada have shaken the public’s trust in radiology.

- The need for peer review solutions is obvious in order to protect patients and radiologists alike.

- Retrospective and, more recently, prospective peer review solutions are being implemented.

Incorrect interpretation of studies is the leading cause of malpractice lawsuits against radiologists. Regulators expect a definition of acceptable levels of performance amongst radiologists even if there is no enforceable consensus on any admissible error rate that could be used.

Over the past few years, Canada has been swept by a wave of highly publicised reviews of radiologists, from New Brunswick to British Columbia (BC), through Quebec and Saskatchewan. These affairs have made the front pages of the national newspapers and have been covered by all major broadcast media. Triggered by hospital chiefs of staff or regional health authorities who had been notified of erroneous radiology reports, extensive reviews have been undertaken, with tens of thousands of cases submitted to a second reading. In some cases, the reviews found high miss rates, up to 30% of CTs for one radiologist on the West Coast, but in other cases the miss rates were very low.

This is of course a devastating experience for the radiologists implicated, sometimes for very few misses, leading to careers ending in disgrace or, at best, early retirement. Yet although these reviews have been going on for several years, the radiology community has taken very little action to remedy the situation. The need for peer review solutions became obvious in order to protect patients and radiologists alike.

The Cochrane Report

After an infamous review of thousands of CTs in British Columbia, which found an exceptionally high number of errors, the BC Minister of Health Services requested in February 2011 an independent two-part investigation into the quality of diagnostic imaging, and asked Dr. Doug Cochrane, Provincial Patient Safety and Quality Officer, to lead this review. The first part of the report centred on the credentials and individual experience of the radiologists involved. The second part of the report provided a description of the events, a review of quality assurance and peer review of medical imaging, and a review of physicians’ licensing and credentialling, and issued 35 recommendations (Cochrane 2011). Among the recommendations pertaining to the provision of diagnostic imaging services, six were specific to Quality Assurance and Peer Review in diagnostic imaging (recommendation numbers 15 to 20) (see Table 1).

Table 1. Cochrane Report Recommendations

The Cochrane report recommended, inter alia:

- That the health authorities and College develop a comprehensive and standardised retrospective peer review process that can be used in the regional health system or the private facilities;

- That the Provincial Medical Imaging Advisory Committee establish a provincial management system to oversee diagnostic imaging retrospective peer review processes in BC;

- That each health authority establish a Diagnostic Imaging Quality Assurance Committee to provide oversight for diagnostic imaging services provided by the health authority;

- That the British Columbia Radiology Society (BCRS) establish a teaching library of images reflecting difficult interpretations and common errors;

- That the BCRS, the Canadian Association of Radiologists (CAR) and the Royal College establish modality specific performance benchmarks for diagnostic radiologists that can be used in concurrent peer review monitoring.

Source: Cochrane 2011, recommendations 15-20.

The Canadian Association of Radiologists Guidelines

The recent cases have raised awareness among radiologists that they have to engage actively in quality control, in order to avoid situations where large-scale reviews are mandated by local or regional authorities.

The Canadian Association of Radiologists published a white paper in September 2011 to outline the requirements for peer review processes and suggest ways to integrate them into practice (Canadian Association of Radiologists 2011). This white paper came with a number of recommendations (see Table 2).

Table 2. Recommendations for Peer Review (Canadian Association of Radiologists 2011)

That the peer review process:

- reveals opportunities for quality improvement;

- helps improve individual outcomes;

- is a fair, unbiased, and consistent process;

- allows trends to be identified;

- must employ random selection of cases broadly representing the work done in a department;

- ensures the opinions of both the reviewers and the radiologists being reviewed are recorded;

- has minimal effect on workflow;

- allows easy participation;

- includes a reactive or proactive double reading with two physicians interpreting the same study;

- must include examinations representative of each physician’s specialty;

- must allow assessment of the agreement of the original report with subsequent review (or surgical or pathology findings);

- must use an approved classification of peer review findings with regard to level of quality concern;

- must have policies and procedures for action to be taken on significantly discrepant peer review findings for quality outcomes improvement.

Retrospective Peer Review: RADPEER™

Very few sites were using RADPEER™, the quality control solution developed by the American College of Radiology. One of our groups in Hamilton was among the first adopters in Canada.

RADPEER™ allows the radiologist to record discrepancies in previous reports, when available, while reporting the current case (American College of Radiology). Each reviewed report is scored on a scale from 1 to 4, where 1 indicates agreement with the original interpretation, 2 a discrepancy that is easily missed, and 4 a finding that is obvious and should not have been missed. For scores of 2 and above, there are two categories: (a) means no impact on patient management, and (b) means that the miss may have created a risk to the patient.
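The scoring scheme just described can be written down as a small data structure. The following is only a minimal sketch, not the ACR's software: the names `PeerScore` and `parse_score` are hypothetical, and the rule that the (a)/(b) category is required from 2 upward and absent for 1 is an assumption drawn from the description above.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class PeerScore:
    """A RADPEER-style rating as described in the text (hypothetical type)."""
    level: int                # 1 to 4
    category: Optional[str]   # "a" or "b" for levels 2-4, None for level 1

    @property
    def patient_risk(self) -> bool:
        # Category (b): the miss may have created a risk to the patient.
        return self.category == "b"


def parse_score(text: str) -> PeerScore:
    """Parse strings such as "1", "2a" or "4b" into a PeerScore."""
    text = text.strip().lower()
    if not text or not text[0].isdigit():
        raise ValueError(f"score must start with a digit: {text!r}")
    level, category = int(text[0]), text[1:] or None
    if level not in (1, 2, 3, 4):
        raise ValueError(f"level must be between 1 and 4: {text!r}")
    if level == 1 and category is not None:
        raise ValueError("level 1 takes no (a)/(b) category")
    if level >= 2 and category not in ("a", "b"):
        raise ValueError("levels 2 to 4 need category 'a' or 'b'")
    return PeerScore(level, category)
```

For example, `parse_score("3b").patient_risk` is true, which is the kind of flag a department might use to decide which discrepancies need prompt follow-up.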

But retrospective peer review solutions have limitations, the main one being their limited impact on patient care. With RADPEER™, the radiologist will randomly review the report of a previous exam when he/she reads a current exam. But this means that there must be a previous exam, and that this previous exam is usually old, more than six months and even up to two years, by which time it will be too late to take any corrective action. The radiology department will then have to inform the referring physician and the patient that a mistake has been made, which of course is a disaster in the case of malignancy, quite apart from the legal implications that can arise.

Moreover, what happens if a case performed a few months ago had a wrong interpretation, but there has been no follow-up? Then this case will never be reviewed, with the potential consequences of a miss for the patient. Similarly, new patients, in particular emergency patients, will not see their images reviewed.

RADPEER™ was perceived as punitive rather than as the learning experience it should be, and radiologists had the feeling, sometimes justified, that the data could be used against them.

Prospective Peer Review

Many institutions across North America consider that the RADPEER™ model, which was a game changer when introduced 14 years ago, no longer meets their needs, and that we should move away from retrospective peer review and adopt prospective peer review instead.

What is a prospective peer review? It is a review done by a peer before the report is finalised and sent to the referring physician, just in time for the radiologist to correct any misdiagnosis which could be relevant to patient care.

The benefits of a prospective peer review solution are multiple. With a configurable sampling rate, the review is fully integrated in the workflow. Exams will be reviewed anonymously, before the report is distributed on the network, by a radiologist of the same specialty who is based at another hospital. If the reviewer disagrees with the original radiologist, he/she will communicate his/her impression to the first radiologist, asking that he/she amend the report. If the two radiologists disagree, then the case will go to arbitration, which may delay the release of the final report. Of course, turnaround time needs to be faster for emergency cases, which may sometimes be impractical, but not impossible, if the routing system is given the appropriate configuration. Overall, a prospective peer review solution does not impact productivity more than a retrospective system.
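The sampling and disagreement steps of this workflow can be sketched in a few lines. This is a hedged illustration only, not the logic of any actual product: the names `Outcome`, `is_sampled` and `resolve` are hypothetical, and the use of a simple random draw for sampling is an assumption.

```python
import random
from enum import Enum


class Outcome(Enum):
    RELEASE = "release report as written"
    AMEND = "original radiologist amends report"
    ARBITRATION = "case goes to arbitration"


def is_sampled(sampling_rate: float, rng: random.Random) -> bool:
    """Decide whether an exam is routed to a peer before release."""
    if not 0.0 <= sampling_rate <= 1.0:
        raise ValueError("sampling_rate must be between 0 and 1")
    # rng.random() lies in [0, 1), so a rate of 0 never samples
    # and a rate of 1 always does.
    return rng.random() < sampling_rate


def resolve(reviewer_agrees: bool, author_accepts_change: bool) -> Outcome:
    """Map the two radiologists' positions to the next workflow step."""
    if reviewer_agrees:
        return Outcome.RELEASE
    if author_accepts_change:
        return Outcome.AMEND
    # Reviewer and original radiologist disagree: a third party decides.
    return Outcome.ARBITRATION
```

A department could call `is_sampled(0.05, random.Random())` for each completed exam; the 5% figure is purely illustrative and does not come from any of the programmes discussed here. Emergency cases might use a different rate or a shorter review deadline, which is a configuration choice rather than a change to the logic.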

Anonymisation is important to eliminate bias and allow emotional separation between reporter and reviewer. However, it may sometimes be difficult to anonymise the patient information, as the name is burned into the ultrasound images or can be seen on the requisition, and the style of the reporting radiologist may be easily identified.

What is the Current Status?

After a succession of reviews, with growing public concern and a loss of trust in the quality of radiologists (when it should be the reverse), what is the situation in Canada and how have we addressed the crisis?

- British Columbia, two years after the review, has selected a province-wide software solution, but is struggling with major IT issues and a lack of standardisation; as a result, only one of the six health authorities has a working solution.

- Alberta has just issued an RFP (Request for Proposal) for a province-wide retrospective peer review solution based on the Cochrane recommendations, including teaching files to display difficult interpretations and common errors.

- Saskatchewan and Manitoba have no provincial strategy at the moment, even if it is on the radar. Alberta, Saskatchewan and Manitoba each run a single province-wide PACS, which should facilitate implementation.

- Ontario has just created a committee, at the initiative of the Ministry of Health, to look at a provincial solution; with multiple regional networks that are not integrated and do not communicate, this will probably not be an easy task.

- Quebec has not published a provincial strategy at the moment.

- Nova Scotia has identified peer review as a priority, but has not implemented a solution yet.

- Newfoundland is also on hold, even though a provider has already been identified.

The McMaster Approach

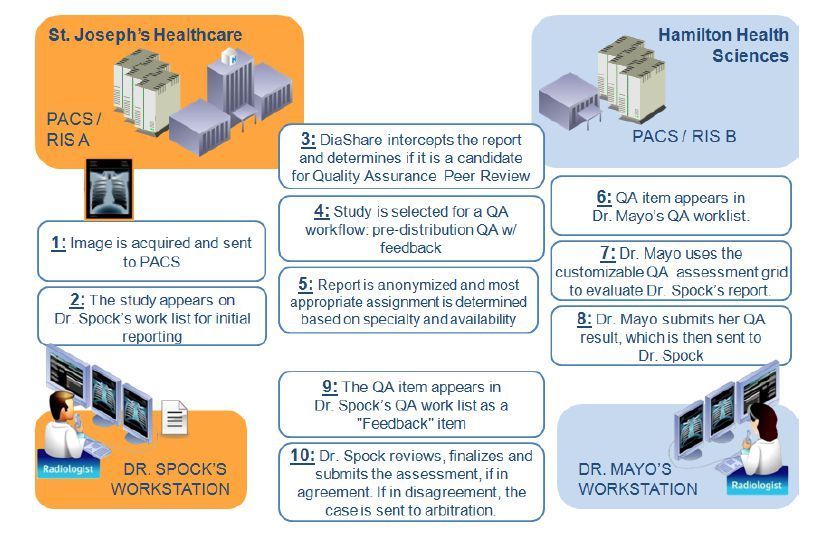

At McMaster University in Hamilton, Ontario, we have implemented a prospective, pre-report-finalisation quality assurance solution (Koff et al. 2012). It is deployed across our four hospitals and runs on two different PACS (Picture Archiving and Communication Systems) and two different RIS (Radiology Information Systems), with the ultimate goal of making it available through our Local Health Integration Network (LHIN), which includes all hospitals in our region. Our solution is embedded in the workflow and fully automated. Radiologists from one site are reviewed by radiologists from other sites to maintain anonymisation and create emotional separation. The process takes individual subspecialties into consideration and automatically matches readers and reviewers. It has been met with enthusiasm by all our radiologists, even those who were reluctant a few years ago.
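To make the matching rule concrete, here is a minimal sketch of how a reviewer might be chosen from another site within the right subspecialty. It is not the deployed system: the names `Radiologist` and `pick_reviewer` are hypothetical, and preferring the reviewer with the lightest current workload is an assumption added for illustration.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, List, Optional


@dataclass(frozen=True)
class Radiologist:
    name: str
    site: str
    subspecialties: FrozenSet[str]


def pick_reviewer(
    author: Radiologist,
    exam_subspecialty: str,
    pool: List[Radiologist],
    workload: Dict[str, int],
) -> Optional[Radiologist]:
    """Pick a reviewer at a different site who covers the exam's subspecialty.

    Among eligible reviewers, the one with the fewest pending reviews is
    chosen (an assumed policy); ties are broken by name so the result is
    deterministic. Returns None when nobody is eligible.
    """
    eligible = [
        r for r in pool
        if r.site != author.site and exam_subspecialty in r.subspecialties
    ]
    if not eligible:
        return None
    return min(eligible, key=lambda r: (workload.get(r.name, 0), r.name))
```

Because the site check excludes everyone at the author's own hospital, the author can never be selected as his/her own reviewer. Returning `None` leaves it to the caller to decide whether the report is released without review or held until a reviewer becomes available.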

Conclusion

A wave of highly publicised reviews of radiologists has triggered a swift reaction at the government level, with large-scale investigations. Although shocked, the radiology community has been slow to react, but has now understood the need to invest in peer review processes, even at the cost of decreased productivity. The Canadian Association of Radiologists has issued guidelines for peer review, available on its website, which make suggestions without imposing any definite system. Prospective peer review appears to be a better option than retrospective peer review in the interest of the patients we serve, as it allows detection of misses and errors before they are communicated. Peer review must be an educational and non-punitive experience leading to overall improved delivery of care.