The ability to process huge quantities of visual electronic data rapidly and efficiently following man-made and natural disasters could spell the difference between life and death for survivors.

Visual data from security cameras, mobile devices and aerial video provides useful information for first responders and law enforcement.

Such data is critical for detecting hazardous materials, deciding where to send emergency personnel and resources, and tracking suspects in man-made disasters.

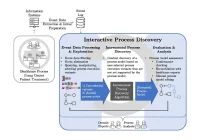

Computer science researchers from the University of Missouri have developed a visual cloud computing architecture to streamline the processes involved in making the best use of visual data in emergencies.

"In disaster scenarios, the amount of visual data generated can create a bottleneck in the network," said Prasad Calyam, assistant professor of computer science in the MU College of Engineering. "This abundance of visual data, especially high-resolution video streams, is difficult to process even under normal circumstances. In a disaster situation, the computing and networking resources needed to process it may be scarce and even not be available. We are working to develop the most efficient way to process data and study how to quickly present visual information to first responders and law enforcement."

The research team has developed a framework for disaster incident data computation that links the system to mobile devices in a mobile cloud. Algorithms help determine which information should be processed in the cloud and which on local devices. By spreading the processing over multiple devices, the architecture helps responders receive information more quickly.
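To make the cloud-versus-local decision concrete, here is a minimal Python sketch. It is not the Missouri team's algorithm, which the article does not describe. It assumes a simple rule: send a task to the cloud only if the estimated upload time plus cloud compute time is shorter than processing on the device. The names `Task` and `place_task`, and all parameters and units, are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Task:
    """A hypothetical unit of visual processing work."""
    name: str
    size_mb: float     # data that would have to be uploaded, in megabytes
    work_units: float  # abstract compute cost


def place_task(task: Task, uplink_mbps: float,
               local_speed: float, cloud_speed: float) -> str:
    """Return "cloud" or "local", whichever has the lower estimated time.

    Speeds are in work units per second. This rule is an illustrative
    assumption, not the method used by the researchers.
    """
    local_time = task.work_units / local_speed
    if uplink_mbps <= 0:
        # No usable network link, so the device must do the work itself.
        return "local"
    upload_time = task.size_mb * 8 / uplink_mbps  # megabytes to megabits
    cloud_time = upload_time + task.work_units / cloud_speed
    return "cloud" if cloud_time < local_time else "local"


if __name__ == "__main__":
    clip = Task("aerial-clip", size_mb=100, work_units=50)
    for uplink in (0, 10, 100):
        print(uplink, place_task(clip, uplink, local_speed=1, cloud_speed=10))
```

Under these assumptions, a congested or missing uplink keeps work on the device, while a faster link shifts it to the cloud. That mirrors the bottleneck Calyam describes.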

The team said that first responders often saw multiple images of the same spot from overlapping cameras, when what they critically needed was to see distinct parts of the scene.

Source: http://www.eurekalert.org/pub_releases/2016-06/uom-vcc062316.php

Image Credit: Pixabay