HealthManagement, Volume 15 - Issue 3, 2015

Meeting the Challenges

Precursors of quality management, quality control and quality improvement initiatives have been known since the early 20th century. However, the industrial revolution and particularly the rapid growth of the automobile industry raised quality issues to a new dimension, and they soon became an integral part of industrial process management. In recent decades quality management has also entered the healthcare system, and quality initiatives specific to the various category groups have quickly evolved (McLees et al. 2015).

Quality management in radiology is manifold. Focusing on the customer perspective, its goals can be summarised as providing safe, effective, efficient, patient-centred and equitable diagnostic and therapeutic radiological care. Radiation safety has become increasingly important (Hricak et al. 2011; Huda, 2015) because, owing to the growing number of examinations using ionising radiation, population doses from medical imaging have increased by 600% in a few decades (Boone et al. 2012; Mettler et al. 2009; Schauer and Linton, 2009).

Examinations that are based on the use of ionising radiation need to be performed in adherence to the three fundamental principles of the International Commission on Radiological Protection (ICRP): “justification, optimisation, and limitation” (ICRP, 2007).

Justification requires that a well-trained individual assigns the most appropriate examination for a clinical indication, considering both the diagnostic information that is likely to be generated and the corresponding patient radiation doses and associated risks (ICRP, 2007; Sierzenski et al. 2014).

Thus justification aims to limit the number of unnecessary examinations and to provide a net patient benefit. The rationale of optimisation is similar to the ALARA (as low as reasonably achievable) principle (Huda, 2015; ICRP, 2007), implying that only the amount of radiation required to adequately address the diagnostic question should be applied. However, patient dose and risk should never be reduced at the expense of diagnostic imaging performance (McCollough et al. 2009). Rather, the informative value and the potential risks of an examination should be kept in good balance. The third principle, dose limitation, refers to dose limits that ought not to be exceeded, as otherwise an individual’s risk of suffering from stochastic dose effects (eg, radiation-induced cancer) would be unduly raised. Dose limitation is more of an issue in occupationally exposed individuals, who are obliged to permanently wear dosimeters in order to monitor radiation exposure and ensure that they stay within the annual dose limits set by the responsible authorities. To stress the importance of radiation safety, the European radiation protection legislation was recently updated, and now requires consistent monitoring of the radiation exposure of both patients and medical staff (Council Directive 2013/59/Euratom). All member states are obligated to transpose the Directive into national legislation and to implement its requirements by 2018 (Council Directive (EC) 2013/59/EURATOM; Mundigl, 2015).

Dose Monitoring Software

Characteristics

One option for controlling patient radiation exposure is the implementation of dose monitoring software. Several vendors have released dose management tools in recent years, all of them allowing for registration, tracking and analysis of doses applied to patients, and thus for monitoring compliance with the three fundamental ICRP principles.

Such software can be connected with any imaging device using ionising radiation. For computed tomography (CT), basic dose information for each patient and protocol includes the computed tomography dose index (CTDI), the dose-length product (DLP) and the size-specific dose estimate (SSDE). After scanning is finished, the dose monitoring tool directly matches the dose data with predefined dose reference levels (DRLs), registers dose over time, and compares the data of an individual patient with those of other patients who underwent the same CT protocol. DRLs are dose values for indication-based examinations and are set by national authorities. They aim to provide guidance on what level of radiation protection is achievable with current competent practice and under the prevailing circumstances, but they are not constraints.
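The matching step described above can be illustrated with a minimal Python sketch. It is not taken from any vendor's product: the record fields, the function names and, in particular, the DRL numbers are hypothetical placeholders chosen only to show the comparison with a DRL and with earlier scans of the same protocol.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class DoseRecord:
    patient_id: str
    protocol: str
    ctdi_vol_mgy: float  # CTDIvol in mGy
    dlp_mgy_cm: float    # dose-length product in mGy*cm


# Hypothetical DRLs per protocol (placeholder numbers, NOT official values).
DRLS = {"abdomen standard": {"ctdi_vol_mgy": 15.0, "dlp_mgy_cm": 650.0}}


def exceeds_drl(record, drls=DRLS):
    """Return the names of the dose quantities that exceed the protocol's DRL."""
    limits = drls.get(record.protocol, {})
    return [name for name, limit in limits.items() if getattr(record, name) > limit]


def ratio_to_peer_median(record, history):
    """Ratio of this scan's DLP to the median DLP of earlier scans with the same protocol."""
    peers = [r.dlp_mgy_cm for r in history if r.protocol == record.protocol]
    if not peers:
        return None  # no comparable scans registered yet
    return record.dlp_mgy_cm / median(peers)
```

A protocol without an assigned DRL simply yields no exceeded quantities here; a real tool would more likely flag such a protocol for review.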

An important function of the software is that, if the applied dose exceeds the DRL, an alert tool transmits a message, which is visible on the overview page of the dose monitoring software. Radiographers therefore know immediately after scanning that the DRL was exceeded, and can enter an explanation for the alert in the comment box of the software. To assess dose performance over a defined period, alert reasons and dose values should be analysed regularly (eg, once a month), thereby allowing for internal and external quality control and comparison with national or international reference values. This analysis also reveals opportunities for improvement: if an alert is frequently caused by the same underlying reason (eg, patient not precisely positioned in the isocentre of the scanner), corrective measures may be undertaken (for example, extra training for radiographers).
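A regular review of alert reasons could look roughly like the following sketch. The alert log format, the reason texts and the threshold of three alerts per month are assumptions made for illustration; actual dose monitoring tools export their data in their own formats.

```python
from collections import Counter

# Hypothetical alert log: (month, protocol, reason entered in the comment box).
alerts = [
    ("2015-03", "abdomen standard", "patient off-centre"),
    ("2015-03", "abdomen standard", "patient off-centre"),
    ("2015-03", "chest", "large patient size"),
    ("2015-03", "abdomen standard", "patient off-centre"),
    ("2015-04", "chest", "patient off-centre"),
]


def alert_reasons_by_month(alerts, month):
    """Count how often each alert reason was recorded in the given month."""
    return Counter(reason for m, _protocol, reason in alerts if m == month)


def recurring_reasons(alerts, month, min_count=3):
    """Reasons recorded at least min_count times, as candidates for corrective measures."""
    counts = alert_reasons_by_month(alerts, month)
    return [reason for reason, n in counts.most_common() if n >= min_count]
```

With this sample log, positioning errors stand out for March, which would be the kind of finding that justifies extra training for radiographers.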

Implementation Considerations

Before planning implementation of dose monitoring software you should be aware of some challenges that need to be met. A dose monitoring tool is software that offers many options, but the available features may not match your department’s expectations and requirements. It is therefore essential to establish at the outset exactly what the department needs. Furthermore, although the software is able to register dose data, it cannot check the data for plausibility. Ensuring high-quality data input is necessary and ultimately determines the usability of the data output.

Step 1: Determine Technical Strategy

If these basic challenges are accepted the next step is to determine your technical strategy, which includes choosing the right dose monitoring software for your requirements. Consideration of the different modalities that should be linked to the software is important, because not all software allows for connection with all modalities. Moreover, to ensure high quality of data input it should be verified that the software can communicate with the hospital information system (HIS) and radiology information system (RIS) and can also be integrated in the local network.

Step 2: Define Organisational Strategy

The next step is to define your organisational strategy, which comprises not only assigning the modalities, but also specifying the scanners/units that ought to be connected with the software. This is important, because within a department not all scanners/units may be from the same vendor and there might be differences concerning connection possibilities. This point also includes considerations about installation of the dose monitoring tool outside the radiology department, where x-rays are used as well (eg, coronary angiography suite). Determination of one’s organisational strategy should also involve clearly setting the goals and expectations coming with the software, because implementation of a dose monitoring tool means extra work that so far is not financially compensated. You should be prepared to be confronted with internal resistance from colleagues owing to reasons such as reluctance to change, lack of time, lack of awareness of the necessity to change and fear of the unknown. Therefore, support, backing, and sponsorship by the head of the department are crucial.

To successfully implement the software in clinical routine it is advisable to start with one modality only, which preferably should be CT, because CT scans are more standardised than, for example, fluoroscopy-guided procedures, in which varying levels of difficulty need to be considered. Moreover, in most countries national DRLs for indication-based CT examinations are available, which facilitates setting dose thresholds.

Dose Team

To promote implementation of the software, represent dose culture and provide contact persons, formation of a dose team is recommended. Ideally this should be composed of one or two radiographers, one board-certified radiologist and the department’s IT specialist. Together with the head of the department the dose team should define a few appropriate, measurable, and achievable goals. As the dose team faces many tasks, particularly at the beginning, including becoming familiar with the software, its members should have protected time for their work. One of their first challenges is to set reasonable dose reference levels; in our department we either used Swiss DRLs, so far available for 21 indication-based CT examinations (Swiss Federal Authority of Healthcare 2010), or we derived thresholds by determining the 75th percentile of the distribution of a defined dosimetric quantity.
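Deriving a local threshold from the 75th percentile can be sketched as follows. The DLP values are invented sample data, and the choice of the "inclusive" interpolation method is an assumption; different percentile conventions give slightly different results on small samples.

```python
from statistics import quantiles


def threshold_75th_percentile(values):
    """75th percentile of a dosimetric quantity, for use as a local dose threshold."""
    # quantiles(..., n=4) returns the three quartile cut points; the last is the 75th percentile.
    return quantiles(values, n=4, method="inclusive")[2]


# Hypothetical DLP values (mGy*cm) collected for one CT protocol.
dlp_values = [400, 450, 500, 550, 600, 650, 700, 750, 800]
local_threshold = threshold_75th_percentile(dlp_values)  # 700.0 for this sample
```

Such a threshold is only as reliable as the underlying sample, so it should be derived per protocol from a sufficiently large and cleaned set of examinations.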

Lessons Learnt

After we had installed the dose monitoring software and had started dose data analysis of our CT scanners, we had to solve unanticipated problems.

1. Data Output Relates to Input Quality

Although we knew that a dose monitoring tool is software, we were not aware of how heavily the data output depends on the quality of the input. One of our main challenges was to match our own CT protocols with the available national DRLs. For example, our abdominal CT protocols comprise “abdomen and pelvis: unenhanced”, “abdomen and pelvis: contrast media-enhanced”, “liver protocol”, “pancreas protocol” etc., whereas national DRLs are separated into “abdomen 1: liver, spleen, pancreas, vessels” or “abdomen 2: standard, abscess, emergency”. Thus our internal processes required intensive adaptation at the beginning, which included cleaning our CT protocol list by removing CT protocols that were no longer in use (eg, from former scanners), defining precise protocol descriptions and using protocol names uniformly, because for the software the protocol name “unenhanced abdomen” is not synonymous with “abdomen unenhanced”. Thereafter the different CT protocols were assigned to the national DRLs, if available, or to our own set thresholds.
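The naming problem can be made concrete with a small sketch that reduces protocol names to a canonical form before they are assigned to a DRL category. The normalisation rule (lower-casing and sorting the words) and the assignments in the lookup table are assumptions for illustration only; in practice the dose team decides which local protocol belongs to which national DRL.

```python
import re


def normalise_protocol_name(name):
    """Lower-case the name and sort its words so that word order and case no longer matter."""
    tokens = re.findall(r"[a-z0-9]+", name.lower())
    return " ".join(sorted(tokens))


# Hypothetical assignment of local protocols to the national DRL categories.
PROTOCOL_TO_DRL = {
    normalise_protocol_name("liver protocol"): "abdomen 1: liver, spleen, pancreas, vessels",
    normalise_protocol_name("pancreas protocol"): "abdomen 1: liver, spleen, pancreas, vessels",
    normalise_protocol_name("abdomen unenhanced"): "abdomen 2: standard, abscess, emergency",
}


def drl_category(protocol_name):
    """Return the assigned DRL category, or None if the protocol has not been mapped."""
    return PROTOCOL_TO_DRL.get(normalise_protocol_name(protocol_name))
```

Here “unenhanced abdomen” and “abdomen unenhanced” resolve to the same entry, while an unmapped or obsolete protocol returns None and can be reviewed manually. Sorting the words is a deliberately crude rule and would conflate names that differ only in word order, so a curated list of agreed protocol names remains the more robust solution.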

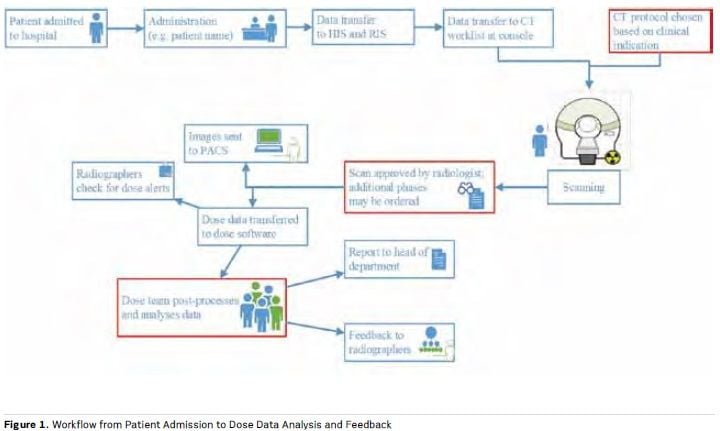

2. Protocol Changes Not Recognised

When we started with data analysis we frequently encountered the problem that the software did not recognise changes of protocol made after scanning had already started. For example, a patient with rectal carcinoma was scheduled for a CT of the abdomen and, based on this indication, the CT protocol “abdomen standard (single phase)” was chosen. But owing to a previously unknown liver lesion, a second phase was ordered by the radiologist on approval of the scan. In this case the software compares the scan’s dose data with the DRL for “abdomen standard”, unless the protocol name is changed manually to “abdomen portal-venous and delayed phase”. This modification of the protocol name is possible within the software as part of the post-processing, and considerably enhances the quality of data analysis by limiting the number of false-positive dose alerts. Figure 1 provides an overview of the different processes involved in radiation safety quality control.

3. Change Resistance

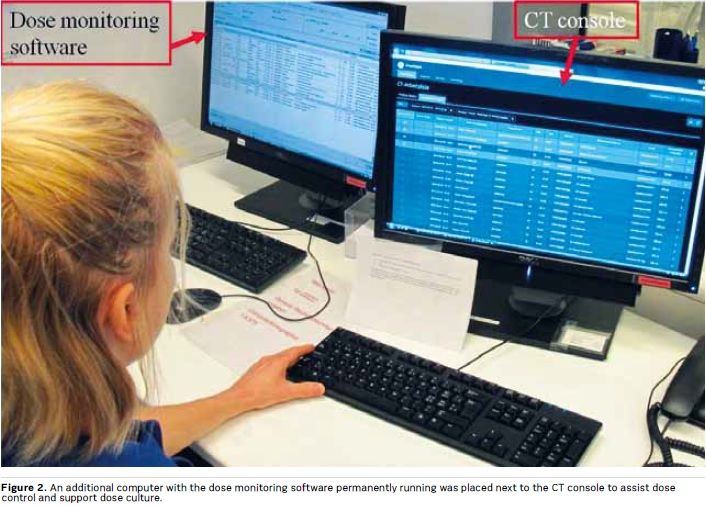

Particularly at the beginning, resistance to change is often encountered, based on perceived nuisance and extra work, but also due to neglect when a task was not part of clinical routine before. To overcome this resistance and improve compliance it is important to integrate dose monitoring into the daily workflow and to establish a dose culture. We therefore placed an additional computer next to the CT console, on which the software was permanently running (see Figure 2). By immediately displaying the patient dose data, the radiographers’ awareness regarding radiation safety increased.

4. Optimisation Processes

After the software has been successfully implemented in clinical routine, dose data should be collected for several months before optimisation processes are started. The reason is that optimisation ought to be based on valid data, which are the prerequisite for effective and efficient improvements. It is better to focus first on one modality and on the most frequent protocols, as too many changes made at once may cause confusion, data disorder, and excessive demands on the staff, ultimately leading to failure of the whole dose monitoring project.

5. RIS Integration

Despite being challenging at the beginning there are several advantages that compensate for the efforts to integrate the dose monitoring tool into the RIS. Among these especially the automatic registration of protocol changes during the scanning is valuable because it considerably alleviates dose data post-processing and analysis (no manual change of protocol name is required) and improves quality of data output. The RIS integration also allows for an automatic display of dose data on each radiological exam report and would enable the use of only one single master IT-system, thus significantly enhancing the convenience when dose monitoring software is applied.

Conclusions

Dose monitoring software is a valuable tool for internal and external quality control of dose data. It can be successfully integrated in clinical routine and increases patient and business safety. However, implementation of a dose monitoring tool is a demanding task that requires the support of the head of the department. It is advisable to build a multidisciplinary dose team, which assists in integrating the software into daily routine and establishes a dose culture. It should always be kept in mind that the tool is software, with the quality of data output largely relying on data input. Because of that, dose culture and processes have to be created and implemented by the users, which needs time and resources.

References:

Boone JM, Hendee WR, McNitt-Gray MF et al. (2012) Radiation exposure from CT scans: how to close our knowledge gaps, monitor and safeguard exposure--proceedings and recommendations of the Radiation Dose Summit, sponsored by NIBIB, February 24-25, 2011. Radiology, 265(2): 544–54.

Council Directive (EC) 2013/59/EURATOM of 5 December 2013 laying down basic safety standards for protection against the dangers arising from exposure to ionising radiation, and repealing Directives 89/618/Euratom, 90/641/Euratom, 96/29/Euratom, 97/43/Euratom and 2003/122/Euratom. [Accessed: 30 October 2014] Available from: http://eur-lex.europa.eu/legal-content/EN/ALL/?uri=CELEX:32013L0059

Hricak H, Brenner DJ, Adelstein SJ et al. (2011) Managing radiation use in medical imaging: a multifaceted challenge. Radiology, 258(3): 889–905.

Huda W (2015) Radiation risks: what is to be done? AJR Am J Roentgenol, 204(1): 124–7.

International Commission on Radiological Protection (ICRP) (2007) The 2007 recommendations of the International Commission on Radiological Protection. ICRP Publication 103. Ann ICRP, 37(2-4): 1–332.

McCollough CH, Guimarães L, Fletcher JG (2009) In defense of body CT. AJR Am J Roentgenol, 193(1): 28–39.

McLees AW, Nawaz S, Thomas C et al. (2015) Defining and assessing quality improvement outcomes: a framework for public health. Am J Public Health, 105(Suppl 2): S167–73.

Mundigl S (2015) Modernisation and consolidation of the European radiation protection legislation: the new EURATOM Basic Safety Standards Directive. Radiat Prot Dosimetry, 164(1-2): 9–12.

Schauer DA, Linton OW (2009) National Council on Radiation Protection and Measurements report shows substantial medical exposure increase. Radiology, 253(2): 293–6.

Sierzenski PR, Linton OW, Amis ES et al. (2014) Applications of justification and optimization in medical imaging: examples of clinical guidance for computed tomography use in emergency medicine. J Am Coll Radiol, 11(1): 36–44.

Simeonov G (2015) European activities in radiation protection in medicine. Radiat Prot Dosimetry, Apr 12, pii: ncv031. [Epub ahead of print].

Bundesamt für Gesundheit [Swiss Federal Authority of Healthcare] (2010) Diagnostische Referenzwerte in der Computertomographie Merkblatt [Diagnostic reference levels in computed tomography]. [Accessed: 4 June 2015] Available from: http://www.bag.admin.ch/themen/strahlung/10463/10958/