If we understand why people make mistakes, we can build systems appropriately, said Ken Catchpole, Director of Surgical Safety and Human Factors Research at Cedars-Sinai Medical Center in Los Angeles, on the opening day of the UKRC in Manchester, UK. Getting a packed plenary session to stand on one leg, circle the other foot clockwise and draw the number 6 in the air was an arresting way to show how the human brain is predisposed to error.

Catchpole quoted Dekker: "Human error is an inevitable byproduct of the pursuit of success in an imperfect, unstable, resource-constrained world."

The statistics are well known: around 10% of patients experience an error associated with hospitalised care, and in 1 in 300 cases some error contributes to an adverse event or death. People don't change that much across different industries, and Catchpole's research focuses on why people do what they do in complex systems.

The Swiss cheese model has layers of primary, secondary and last defence, but when the 'holes' line up, errors occur. When something goes wrong it is natural to blame individuals, even though the fault might lie with the system itself.

Humans are uniquely able to function in uncertainty and make trade-offs. In fact, it is humans who create safety in complex systems, asserted Catchpole. A complex system is inherently unsafe, always functions at the limits of its capacity, and requires safety to be traded for other aspects of system performance. Catchpole focuses on the human factors model, where people are at the centre. People's performance is influenced by the organisation, their tasks, the environment and technology. Rather than just training people and improving their awareness of safety, all of these factors can be examined and changed.

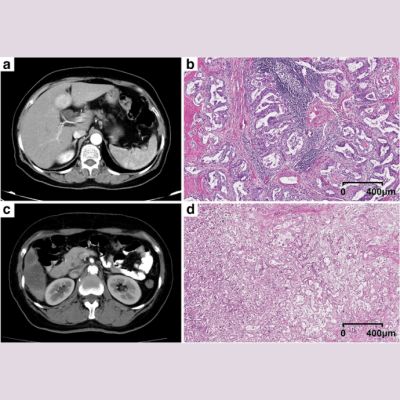

Giles Boland, Professor of Radiology, Harvard Medical School and Vice Chair, Dept of Radiology, Massachusetts General Hospital in Boston, focused on the whole of the imaging chain, from appointment through to distribution of the radiology report.

Boland believes that radiology is poor at recognising and addressing errors. Errors are pervasive, and have a major impact on outcomes and value. By looking at improving every part of the imaging chain, errors can be minimised.

It is natural to underperform, and we make mistakes all the time, in every stage of the process. Radiologists typically think of errors as occurring at the reporting stage, such as missed findings or interpretation errors. However, there is potential for error in every part of the chain. Part of the problem is measuring the 'wrong' metrics, including report turnaround time, hospital length of stay and volume throughput. In the USA this is changing to value-driven outcomes measurement, looking at patient experience and safety, care coordination, preventative health and the health of at-risk and elderly patients.

Marked variations in practice contribute to error, for example dose variation in CT scans. Protocols can vary from one radiologist to another. Interobserver and intraobserver variation in reporting is also well known. Imagine if banking were like medicine, said Boland: you put your card in the ATM and are given a different amount of money every time.

These variations and potential for error have some solutions, said Boland. Clinical decision support tools at the point of care will help to remove variance and promote consistency. Structured reporting will assist referring doctors and enable data mining. "We are in the information business, remember", said Boland.
